We are pleased to announce that LayerStack Load Balancers are now available in Hong Kong, Singapore and Tokyo. With our Load Balancers, you can maximize the capabilities of your applications by distributing traffic among multiple cloud servers regionally and globally.

Why do you need load balancers?

Over the course of my career, I’ve learned that one tenet of work efficiency is sensible delegation. The same applies to cloud servers.

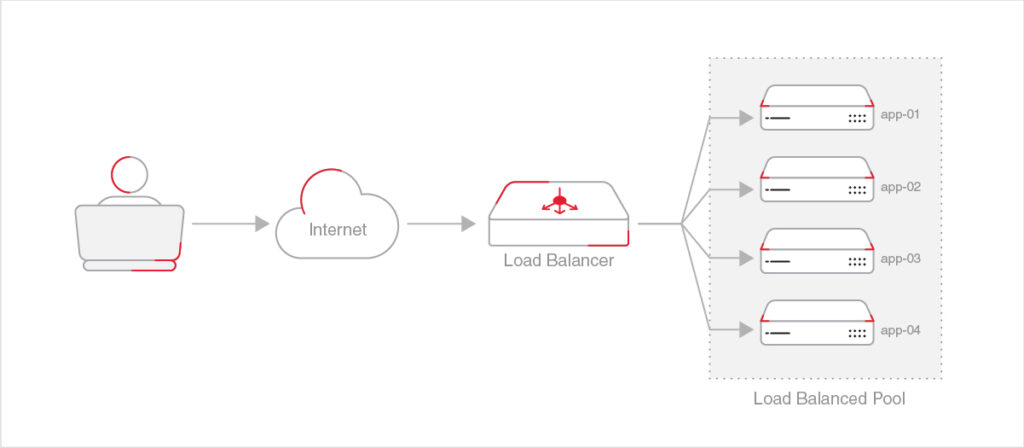

At their core, load balancers are traffic controllers that distribute the incoming flow of data across a pool of servers. Spreading the workload means no single server bears too much traffic at any given time, which is the best insurance against unexpected slowdowns or service downtime.

This is, however, just the beginning. Tactful traffic direction opens doors to countless possibilities. Maintaining high service availability and scaling across regions are just a few of many common use cases, and we will get to them in a minute.

Use case 1: Spread workload for scaling

The dynamic traffic routing by the load balancers creates a distributed system that handles varying workloads efficiently. The balancer directs inbound traffic to available cloud servers for stable, responsive web performance, making it ideal for quick horizontal scaling, whether in response to sudden traffic surges or deliberate business expansion.

In the LayerStack control panel, you can choose from three algorithms that determine how the load balancers route traffic, each with its own benefits:

Round-robin: The most common algorithm, in which the available servers form a queue. When a new request comes in, it is handled by the server at the top of the queue. The next request goes to the next server in the queue, and so on down the list. When the balancer reaches the bottom of the list, it starts again from the first server. It is the simplest algorithm to implement.

Least connection: When you need help, you (hopefully) won’t reach out to the one colleague with the most pressing deadlines to meet. Under this algorithm, the balancer keeps track of how many connections each available server is currently handling and assigns new requests to the one with the fewest active connections. This mechanism is more resilient to heavy traffic and demanding sessions.

Source-based: Workloads are distributed according to the source IP address of incoming requests, giving you the flexibility to group certain application-specific tasks together, or to tailor the environment to certain requests.
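To make the three options more concrete, here is a minimal Python sketch of how each one might pick a server. It is an illustration only, not LayerStack's implementation: the class names, the `pick` and `release` methods and the server labels are all hypothetical, and hashing the client IP is just one assumed way to distribute "according to the IP address".

```python
import hashlib
from itertools import cycle


class RoundRobin:
    """Hand out servers in list order, wrapping back to the first."""

    def __init__(self, servers):
        self._servers = cycle(servers)

    def pick(self, client_ip=None):
        return next(self._servers)


class LeastConnection:
    """Pick the server with the fewest active connections."""

    def __init__(self, servers):
        self.active = {server: 0 for server in servers}

    def pick(self, client_ip=None):
        server = min(self.active, key=self.active.get)
        self.active[server] += 1  # the new request opens a connection
        return server

    def release(self, server):
        self.active[server] -= 1  # call this when a connection closes


class SourceBased:
    """Map each client IP to a fixed server (assumption: by hashing the IP)."""

    def __init__(self, servers):
        self.servers = list(servers)

    def pick(self, client_ip):
        digest = hashlib.sha256(client_ip.encode()).digest()
        index = int.from_bytes(digest[:8], "big") % len(self.servers)
        return self.servers[index]
```

With three servers, `RoundRobin` cycles through them in order, `LeastConnection` favours whichever server has released the most connections, and `SourceBased` always returns the same server for the same client IP as long as the server list does not change.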

Use case 2: High availability with health check

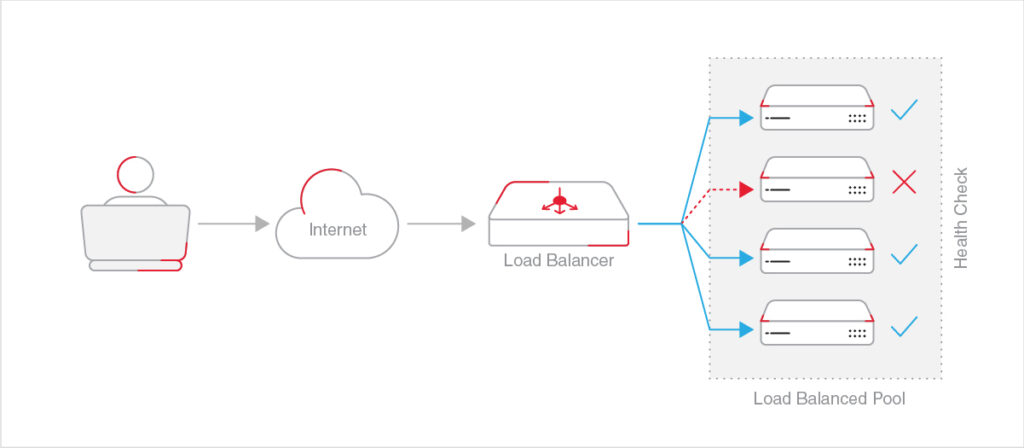

While load balancers do a fantastic job of withstanding volatile traffic patterns, they are equally good at limiting the impact of system failures.

The load balancers perform periodic health checks on all available servers. If a server fails its check, requests are routed only to the healthy ones until the issue is resolved. This naturally provides a failover mechanism that prepares you for the worst.
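The idea can be sketched in a few lines of Python. This is a simplified model under stated assumptions, not the actual LayerStack health checker: `HealthTracker`, `probe`, `fail_threshold` and `rise_threshold` are hypothetical names, and the rule "mark a server down after N failed checks in a row, and back up after M successful ones" is a common convention assumed here for illustration.

```python
class HealthTracker:
    """Track which servers are healthy based on repeated probe results."""

    def __init__(self, servers, probe, fail_threshold=3, rise_threshold=2):
        self.probe = probe                    # callable: server -> True if it responds
        self.fail_threshold = fail_threshold  # failed checks in a row before marking down
        self.rise_threshold = rise_threshold  # passed checks in a row before marking up
        self.healthy = {server: True for server in servers}
        # Consecutive results that contradict the server's current state.
        self._streak = {server: 0 for server in servers}

    def run_checks(self):
        """Run one round of checks; call this on a fixed interval."""
        for server, is_healthy in list(self.healthy.items()):
            ok = self.probe(server)
            if ok == is_healthy:
                self._streak[server] = 0
                continue
            self._streak[server] += 1
            needed = self.rise_threshold if ok else self.fail_threshold
            if self._streak[server] >= needed:
                self.healthy[server] = ok
                self._streak[server] = 0

    def healthy_servers(self):
        """Only these servers should receive new requests."""
        return [server for server, up in self.healthy.items() if up]
```

In a real setup, `probe` would be something like an HTTP or TCP request with a timeout, and the balancer would choose only among `healthy_servers()` when routing.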

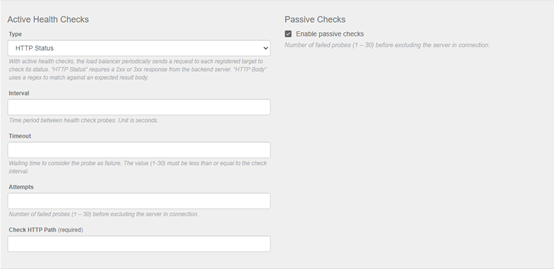

Customizing the health checks to your liking is quick and easy. Simply tweak the parameters in the Settings section of the Load Balancers in the control panel.

Use case 3: Distribute traffic across multiple regions

Similar to how failover works, load balancers are a great way to scale your applications geographically, since they can direct incoming traffic to cloud servers in different regions. All you need to do is replicate your infrastructure (web servers, databases, load balancers and private networks) in each location. Load balancers will distribute inbound requests to servers in the corresponding locations for optimal performance, all while carrying out scheduled health checks to maintain overall stability.
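As a rough illustration, region-aware routing with failover could look like the Python sketch below. Everything in it is an assumption made for the example: the `route` function, the region keys and the server names are hypothetical, and falling back to another region when the local one has no healthy server is one possible policy rather than a description of how LayerStack behaves.

```python
def route(client_region, pools, healthy):
    """Return a server for the client, preferring its own region.

    pools:   dict mapping a region name to its list of servers
    healthy: set of servers that passed their latest health checks
    """
    # First choice: a healthy server in the client's own region.
    for server in pools.get(client_region, []):
        if server in healthy:
            return server
    # Fallback (assumed policy): any healthy server in another region.
    for region, servers in pools.items():
        if region == client_region:
            continue
        for server in servers:
            if server in healthy:
                return server
    return None  # nothing is healthy anywhere


# Hypothetical server names for the three launch regions.
pools = {
    "hong-kong": ["hk-web-1", "hk-web-2"],
    "singapore": ["sg-web-1"],
    "tokyo": ["tk-web-1"],
}
```

Here a Singapore visitor is served by `sg-web-1` while it is healthy, and is sent to a server in another region only if it goes down.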

Let nothing stop you!

These are just a few examples of where load balancers can be helpful. Get creative and explore more use cases that fit your specific needs!

Setting up load balancers is pretty foolproof, but we also understand that a detailed step-by-step tutorial always comes in handy. Please click here for more details.

If you have any ideas for improving our products or want to vote on other ideas so they get prioritized, please submit your feedback on our Community platform. Feel free to pop by our community.